A new analysis of the evolving role of artificial intelligence in software development finds that AI coding tools have become a double-edged sword for open-source programs. The tools make writing new features easier than ever and can accelerate work for skilled developers, but they have also unleashed a surge of automatically generated submissions that often lack the quality and maintainability serious projects require. Project maintainers are struggling with an overwhelming volume of low-quality merge requests that strain limited volunteer resources and threaten to fragment software ecosystems, forcing teams to reconsider contribution guidelines and adoption practices in order to protect stability, uphold quality standards, and balance innovation with long-term upkeep.

Sources

https://techcrunch.com/2026/02/19/for-open-source-programs-ai-coding-tools-are-a-mixed-blessing/

https://tech.yahoo.com/ai/articles/open-source-programs-ai-coding-140000153.html

https://www.techbuzz.ai/articles/ai-coding-tools-flood-open-source-with-low-quality-code

Key Takeaways

• AI coding tools have significantly lowered entry barriers for code submission, resulting in an influx of automated, low-quality contributions that burden open-source maintainers.

• While experienced developers can use AI tools to accelerate feature creation and refactoring, the sheer volume of submissions is outpacing their quality and pushing volunteer reviewers toward unsustainable workloads.

• The open-source community is beginning to adapt by exploring stricter contribution policies and tools to verify code quality and manage AI-generated submissions.

In-Depth

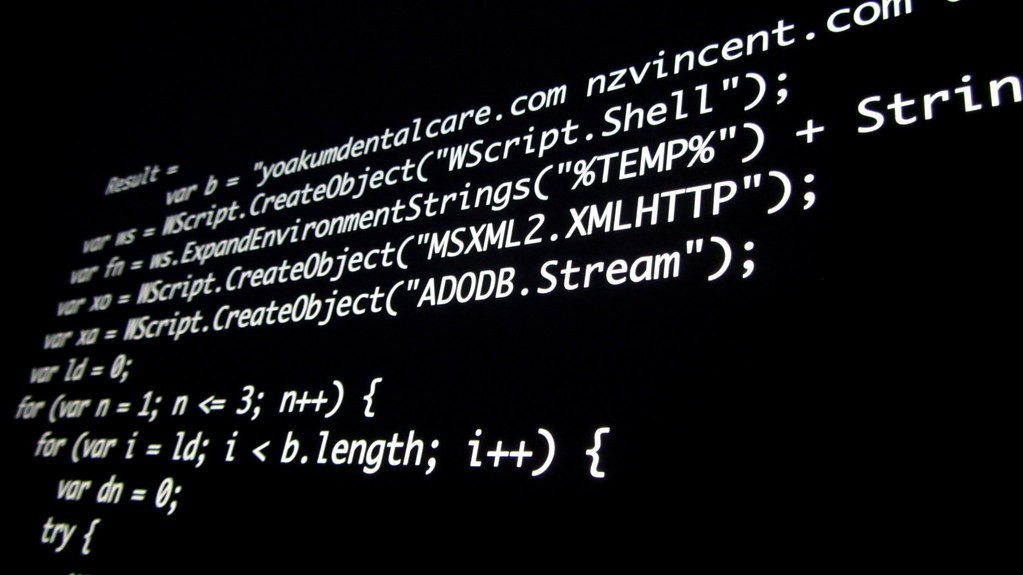

Artificial intelligence coding assistants have rapidly shifted from promising productivity boosters to contentious fixtures in the world of open-source software. According to recent reporting, AI coding tools such as automated merge request generators and suggestion engines have been embraced for their ability to streamline common tasks and take over repetitive coding work. However, the same accessibility that makes these tools attractive also creates significant challenges for large, crowdsourced codebases. As described in a detailed article from February 2026, project maintainers are increasingly encountering a deluge of AI-generated submissions that fail to meet the quality standards needed to keep complex projects healthy and stable. These submissions may be functional on the surface, but they often lack architectural insight, coherent documentation, or consideration for larger design principles, forcing maintainers to spend disproportionate time reviewing and rejecting them instead of advancing project goals.

The core tension lies in the nature of open-source development itself: unlike commercial software organizations with dedicated engineering teams, open-source efforts depend heavily on voluntary contributors and a relatively small group of maintainers. When AI tools flood the contribution pipeline with low-quality code, review becomes slower and more costly, and the community’s scarce resources are diverted away from meaningful progress. Long-time contributors have lamented that this dynamic not only slows development but also discourages participation, as seasoned developers find themselves wading through a sea of trivial or misguided patches. In response to this strain, some projects are experimenting with new governance models, such as requiring contributors to be “vouched” before their merge requests are considered, while others are crafting explicit guidelines for acceptable uses of AI in code generation.

Despite these challenges, observers acknowledge that AI tools offer real benefits when experienced engineers use them judiciously. For example, when skilled maintainers harness automation to port code between platforms or generate well-defined components, the efficiency gains can be significant. The divide between high- and low-quality outcomes underscores a broader point: AI does not inherently degrade or improve open-source development, but it does change the calculus of collaboration. Open-source communities are thus confronting a learning curve that concerns not simply how to use the technology, but how to govern contributions, set quality expectations, and preserve the collective expertise that has sustained these projects for decades. This debate reflects a broader challenge in technology adoption: ensuring that powerful automation enhances, rather than undermines, the human craftsmanship at the heart of complex software creation.