Scientists at CERN are increasingly turning to artificial intelligence to challenge and re-evaluate the core theories of modern particle physics, reflecting a growing recognition that current models, including the widely accepted Standard Model, remain incomplete despite decades of experimental validation. At the Large Hadron Collider, where particles are smashed together at extraordinary energies, researchers have found that the prevailing theories continue to hold up under experimental scrutiny. That very consistency has created a paradox: without cracks in the existing framework, physicists struggle to find a path toward new physics that could explain mysteries such as dark matter or the imbalance between matter and antimatter. AI is now being deployed not merely to analyze the massive datasets that collider experiments generate but to identify patterns, anomalies, or conceptual pathways that human researchers may overlook, potentially guiding scientists toward new theoretical frameworks that could redefine our understanding of the universe.

Sources

https://www.semafor.com/article/03/02/2026/cern-scientists-use-ai-to-reevaluate-physics-theories

https://techstartups.com/2026/03/03/top-tech-news-today-march-3-2026-2/

https://physicsworld.com/a/using-ai-to-find-new-particles-at-the-lhc/

Key Takeaways

- Artificial intelligence is being used not only to analyze particle-collision data but also to help physicists challenge the assumptions behind existing theories and potentially uncover entirely new models of physics.

- The continued success of current theories in explaining collider results has paradoxically slowed theoretical breakthroughs, prompting researchers to use AI to search for overlooked anomalies or hidden patterns.

- AI-driven analysis could accelerate the discovery of “new physics,” potentially helping explain unresolved cosmic puzzles such as dark matter, neutrino masses, and the imbalance between matter and antimatter.

In-Depth

For decades, particle physics has operated under a powerful yet incomplete framework known as the Standard Model, a set of equations and principles describing how fundamental particles interact through the electromagnetic, weak, and strong forces. It has delivered remarkable predictive success, culminating in the discovery of the Higgs boson in 2012, which confirmed the mechanism by which elementary particles acquire mass. Yet despite these achievements, the Standard Model leaves major cosmic questions unanswered. It cannot explain dark matter, the tiny but measurable masses of neutrinos, or why the universe contains more matter than antimatter.

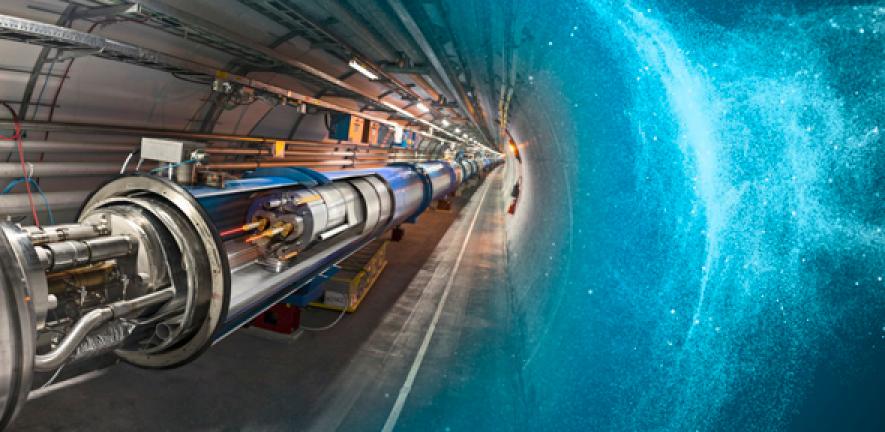

This is where artificial intelligence is beginning to play a transformative role. At CERN, the world's premier particle physics laboratory, scientists are deploying advanced machine-learning systems to sift through the enormous quantities of data generated by the Large Hadron Collider. Each collision inside the LHC produces a cascade of subatomic debris, and the collider generates up to roughly a billion such collisions every second. Human researchers have long relied on statistical tools and theoretical predictions to analyze this data, but AI systems can examine patterns across enormous datasets at a scale and speed that far exceed traditional methods.

The shift toward AI represents more than a technological upgrade; it reflects a philosophical change in how physicists approach discovery. Historically, progress in particle physics has depended on formulating theories first and then designing experiments to test them. Today, AI systems are increasingly capable of identifying correlations and anomalies without being told in advance what kind of signal to look for. In practical terms, machine-learning models could reveal patterns in collider data that point to new physical laws, patterns that human researchers might miss because their searches are shaped by existing theoretical frameworks.
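To make the idea of searching for anomalies "without a target" concrete, here is a deliberately minimal Python sketch. It is not CERN's method: real analyses use far richer models, such as autoencoders or learned density estimators over many event features. The feature choice, the function names (`fit_reference`, `anomaly_score`, `flag_anomalies`), the numbers, and the threshold are all hypothetical. The sketch learns only what ordinary events look like from a reference sample, then flags events that sit far from that typical behaviour, without ever being given a model of what a new-physics signal would be.

```python
import math
from statistics import mean, stdev

# Hypothetical event features: (invariant mass in GeV, missing transverse energy in GeV).
# All numbers below are invented for illustration, not real LHC data.
Event = tuple[float, ...]

def fit_reference(events: list[Event]) -> list[tuple[float, float]]:
    """Learn the mean and standard deviation of each feature from ordinary events.
    Assumes at least two events and that every feature actually varies."""
    return [(mean(column), stdev(column)) for column in zip(*events)]

def anomaly_score(event: Event, model: list[tuple[float, float]]) -> float:
    """Distance of an event from the reference, in standard deviations
    (a diagonal Mahalanobis distance, i.e. features treated as uncorrelated)."""
    return math.sqrt(sum(((x - mu) / sigma) ** 2 for x, (mu, sigma) in zip(event, model)))

def flag_anomalies(events: list[Event], model, threshold: float = 5.0) -> list[int]:
    """Indices of events scoring above a hypothetical threshold. No signal model is used."""
    return [i for i, event in enumerate(events) if anomaly_score(event, model) > threshold]

reference = [(91.0, 10.0), (90.5, 12.0), (91.5, 11.0), (90.8, 9.5), (91.2, 10.5)]
model = fit_reference(reference)
new_events = [(91.1, 10.2), (300.0, 150.0)]
print(flag_anomalies(new_events, model))  # → [1]
```

The first new event resembles the reference sample and passes unflagged; the second lies far outside it and is flagged, even though nothing in the code describes what kind of new particle might have produced it.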

This approach has gained momentum partly because the Large Hadron Collider has produced a puzzling result: the existing theories work too well. The collider’s experiments have repeatedly confirmed the predictions of the Standard Model, leaving physicists without the clear discrepancies that historically triggered major theoretical breakthroughs. The discovery of the Higgs boson, while groundbreaking, effectively completed the Standard Model rather than overturning it. As a result, researchers now find themselves in an unusual position—searching for evidence that their own theories might be incomplete or flawed.
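What "no clear discrepancy" means can be made quantitative with a standard counting-experiment calculation. The Python sketch below assumes a single bin with a known predicted count `b` (standing in for the Standard Model expectation) and an observed count `n`, and applies the common Poisson likelihood-ratio approximation Z = sqrt(2[n ln(n/b) - (n - b)]) for the significance of an excess. Particle physicists conventionally require about 5σ before claiming a discovery. The event counts are invented for illustration, and real analyses also fold in systematic uncertainties that this sketch ignores.

```python
import math

DISCOVERY_THRESHOLD = 5.0  # conventional "5 sigma" discovery criterion

def excess_significance(n_observed: float, b_expected: float) -> float:
    """Approximate significance (in standard deviations) of an excess of
    n_observed events over a known background expectation b_expected:
        Z = sqrt(2 * (n * ln(n / b) - (n - b)))
    Deficits (n <= b) return 0, since only an excess is being tested here."""
    if b_expected <= 0:
        raise ValueError("background expectation must be positive")
    n, b = n_observed, b_expected
    if n <= b:
        return 0.0
    return math.sqrt(2.0 * (n * math.log(n / b) - (n - b)))

# Hypothetical counts: one bin consistent with the prediction, one with a large excess.
for n, b in [(1030, 1000), (1200, 1000)]:
    z = excess_significance(n, b)
    print(f"n={n}, b={b}: Z = {z:.2f}, discovery-level: {z >= DISCOVERY_THRESHOLD}")
```

A 3% excess over 1,000 expected events gives Z of about 0.94, well within ordinary fluctuations; this is the kind of agreement the LHC has kept finding. Only a much larger excess crosses the 5σ line.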

Artificial intelligence offers a new strategy for that search. Machine-learning algorithms can evaluate competing theoretical possibilities, simulate potential particle interactions, and flag unexpected deviations in experimental results. In some cases, these systems can even reconstruct particle collisions with greater precision than traditional analytical techniques, helping scientists extract more information from each experimental event.
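One of the tasks described above, weighing competing theoretical possibilities against data, rests on asking how well each hypothesis predicts what was actually observed. The toy Python sketch below makes that comparison directly with a binned Poisson likelihood ratio. It is not an AI method in itself; in practice, machine-learning classifiers are often used to approximate such likelihood ratios when computing them exactly is intractable. The five-bin spectrum, the "bump" signal, and all function names are hypothetical.

```python
import math

def poisson_log_likelihood(observed: list[int], expected: list[float]) -> float:
    """Log-likelihood of binned counts under independent Poisson expectations.
    Every expected value must be strictly positive."""
    return sum(n * math.log(mu) - mu - math.lgamma(n + 1)
               for n, mu in zip(observed, expected))

def compare_hypotheses(observed: list[int], expected_a: list[float],
                       expected_b: list[float]) -> float:
    """Return ln L(B) - ln L(A); positive values mean the data favour hypothesis B."""
    return (poisson_log_likelihood(observed, expected_b)
            - poisson_log_likelihood(observed, expected_a))

# Hypothetical 5-bin mass spectrum (all numbers invented for illustration).
standard_model = [120.0, 100.0, 80.0, 60.0, 40.0]   # hypothesis A: known physics only
bump = [0.0, 5.0, 25.0, 5.0, 0.0]                    # hypothetical new-particle signal
with_new_particle = [a + s for a, s in zip(standard_model, bump)]  # hypothesis B

observed = [118, 104, 107, 63, 41]
ratio = compare_hypotheses(observed, standard_model, with_new_particle)
print(f"ln L(B) - ln L(A) = {ratio:.2f}")
```

Here the excess in the middle bin pushes the ratio positive, favouring the hypothetical new particle; feeding in data that match the Standard Model prediction exactly would push it negative instead.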

The long-term implications could be profound. If AI systems help uncover subtle inconsistencies in existing models, they may point physicists toward entirely new theories that expand or replace the Standard Model. Such breakthroughs could illuminate the nature of dark matter, reveal previously unknown particles, or even reshape our understanding of the universe’s earliest moments.

From a broader perspective, the growing partnership between human scientists and artificial intelligence represents a natural evolution in scientific discovery. Throughout history, new instruments—from telescopes to particle accelerators—have expanded humanity’s ability to observe the universe. Today, AI may be emerging as the next essential tool in that lineage, enabling researchers to navigate the increasingly complex frontier of modern physics. In an era when the biggest discoveries may lie hidden within mountains of data, the ability of machines to detect the unexpected could prove indispensable in pushing science beyond its current limits.